How can you easily add security and privacy to the software you set up?

Almost every week we learn that yet another major company has detected a security breach with the loss of huge amounts of individuals’ personal information. It is usually some time before we learn exactly how that happened; sometimes we never do! But it often turns out with such events that the root cause boils down to one thing: somebody built, installed or changed some software without thinking through the security and privacy implications.

And these days that’s not just programmers: anyone who sets up a piece of software, even if it’s just a Facebook account, is a ‘software builder’ and may have security or privacy concerns in what they set up. So, when we are building software in this way, how are we to make good on our responsibilities? How are we to think through security and privacy implications?

One might think the best approach would be to ask a security expert for the answer. Security experts are certainly valuable, but they’re also rare; there is fewer than one security expert in the UK to every five professional software developers and to every hundred ‘software builders’, and they are correspondingly expensive. Security experts who can work constructively with software builders are rarer still.

And, of course, the last thing we all want is to spend a lot of time on security; security is important but rarely the main purpose of the systems we work with. So, we need an easy and low-effort way to figure out, without expert support, what to do about security and privacy.

At Lancaster University we have been researching this topic for five years, taking the security best practice from security experts to make it generally accessible. The approach, we found, is simple:

Ideate – Prioritise – Mitigate

Let’s illustrate this with an example. The other day I saw a plea from a colleague organising an international online conference on a politically sensitive topic: please could someone recommend a security expert for the event? Well, I thought, there may be people in the world with the job title ‘Expert on security and privacy for online conferences’, but they’d be hard to find and harder still to contract for a single conference. I suggested the approach above as an alternative, and we did the following.

- Ideate: We gathered several stakeholders in an online meeting around a whiteboard (the organiser, the administrator who’d be managing all the ‘paperwork’, myself and another expert on online events), and we brainstormed the topic ‘What security or privacy things could go wrong?’, with everyone putting notes with ideas on the board. That generated lots of possible issues: ‘what if we see a participant taken ill?’; ‘could participants in some countries be in danger if they express unpopular views?’; and many others.

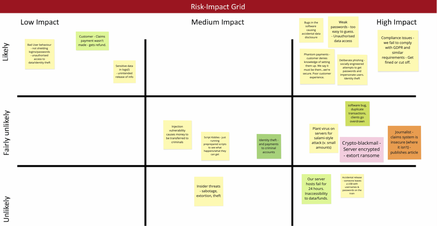

- Prioritise: Next, we moved the post-its around, putting similar ones near each other and removing duplicates. After that, each of us took three red dots and three black dots, and put our red dots next to the issues we thought most likely to happen; and our black dots next to the ones we thought most damaging. Then we moved the post-its highlighted in this way to a three-by-three grid, similar to the picture here.

- Mitigate: Now we knew the most important issues to deal with; they were the ones towards the top right. So, my conference organiser colleague then thought up ways to address them (‘mitigate’ them), and spent the limited ‘budget’ of time and money only on addressing the security and privacy things that mattered. This is where the 80:20 rule scores; focussing on the most important 20% of issues dealt with 80% or more of the security and privacy impact.
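
If you run the session on an online whiteboard, you may want to tally the dots afterwards. Here is a minimal Python sketch of the Prioritise step, not a tool we used on the day. The dot counts are invented, the third issue is a hypothetical extra, and the rule that turns dot counts into low, medium and high bands (thirds of the highest count) is simply one assumption among many possible ones.

```python
from collections import Counter

LEVELS = ("low", "medium", "high")


def band(count: int, top: int) -> int:
    """Map a dot count to 0 (low), 1 (medium) or 2 (high).

    Assumed rule: compare the count with thirds of the highest count.
    """
    if top == 0 or count == 0:
        return 0
    if count * 3 > top * 2:
        return 2
    if count * 3 > top:
        return 1
    return 0


def prioritise(issues, red_dots, black_dots):
    """Place each issue on a 3x3 likelihood/impact grid, most urgent first."""
    red = Counter(red_dots)      # red dots: "most likely to happen"
    black = Counter(black_dots)  # black dots: "most damaging"
    top_red = max(red.values(), default=0)
    top_black = max(black.values(), default=0)
    placed = []
    for issue in issues:
        likelihood = band(red[issue], top_red)
        impact = band(black[issue], top_black)
        placed.append((issue, LEVELS[likelihood], LEVELS[impact], likelihood + impact))
    # Issues nearest the "top right" of the grid have the largest combined score.
    placed.sort(key=lambda row: row[3], reverse=True)
    return placed


# Illustrative data only: four people, three red and three black dots each.
ILL = "participant taken ill"
DANGER = "participant endangered by unpopular views"
DISRUPT = "uninvited attendee disrupts a session"  # hypothetical issue

red_dots = [ILL] * 2 + [DANGER] * 4 + [DISRUPT] * 6
black_dots = [ILL] * 3 + [DANGER] * 8 + [DISRUPT] * 1

ranked = prioritise([ILL, DANGER, DISRUPT], red_dots, black_dots)
for issue, likelihood, impact, _ in ranked:
    print(f"{issue}: likelihood={likelihood}, impact={impact}")
```

The first rows of `ranked` are the issues to spend your mitigation budget on. A spreadsheet, or simply looking at the board, does the same job; the point is only that the ordering comes from the group's dots rather than from one person's hunch.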

The whole process took only a couple of hours. Of course, we may have missed issues, we may have estimated a risk or impact wrongly, and my colleague might have devised ineffective mitigations; even security experts who take days over much more complex security processes often do that. But what we did was a great deal better than not doing it, and was a responsible ‘security best practice’ way (as required by law) to approach the problem.

Oh, and the conference was a success!

Could you do something similar for your software building projects?