16 June 2025

Nine reasons why organisations should worry about continuity through software disaster...

01 May 2025

Why each Agile team must devise its own disciplined development process to suit its situation and concerns...

10 February 2025

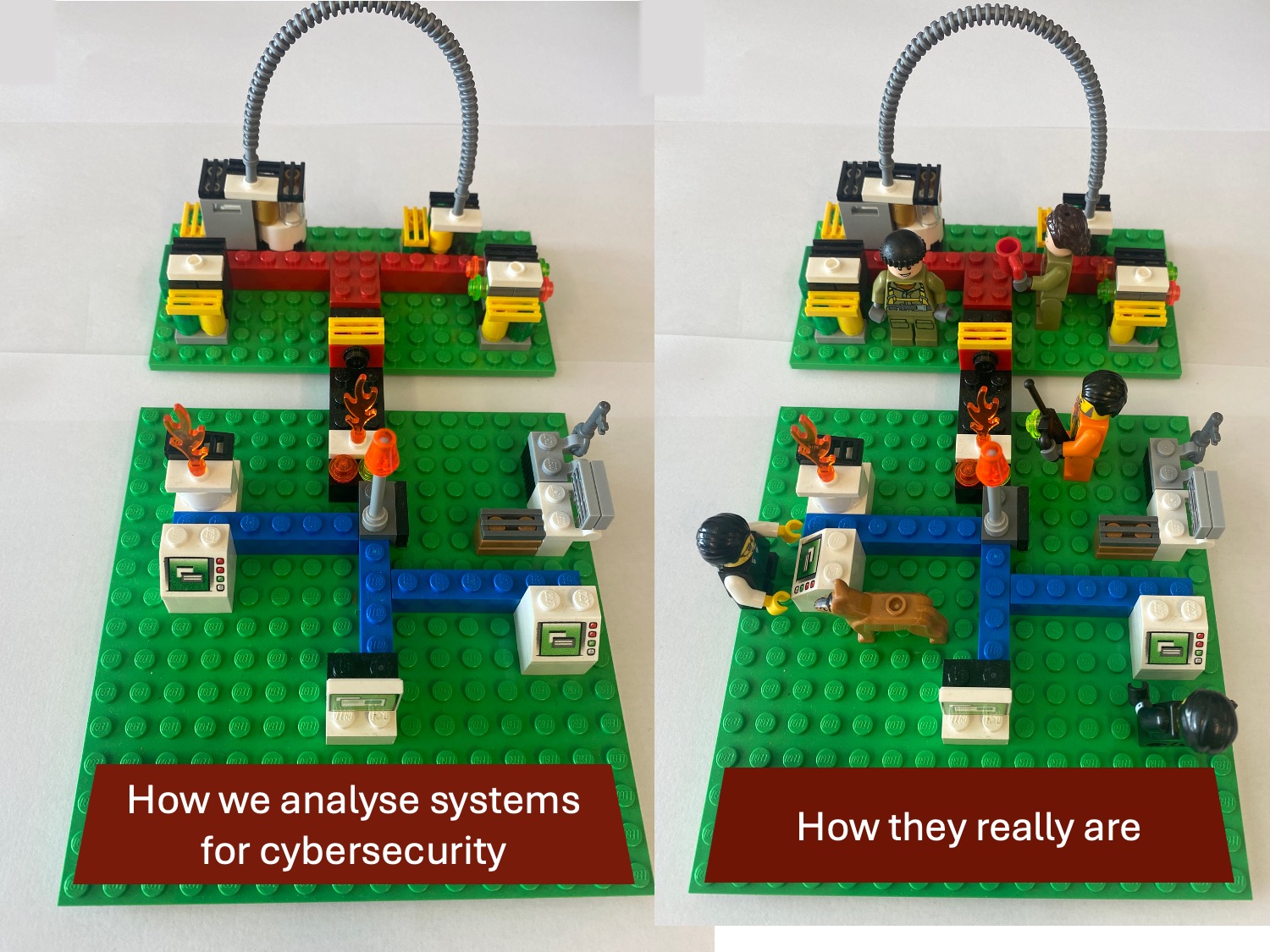

Our greatest need as a society is to limit the damage caused by disasters such as successful cyberattacks. Find out how human-centred system resilience will address this.

16 October 2024

How should we prepare for future cybersecurity risks to our critical national infrastructure? What should we do to avoid being taken by surprise?

03 November 2023

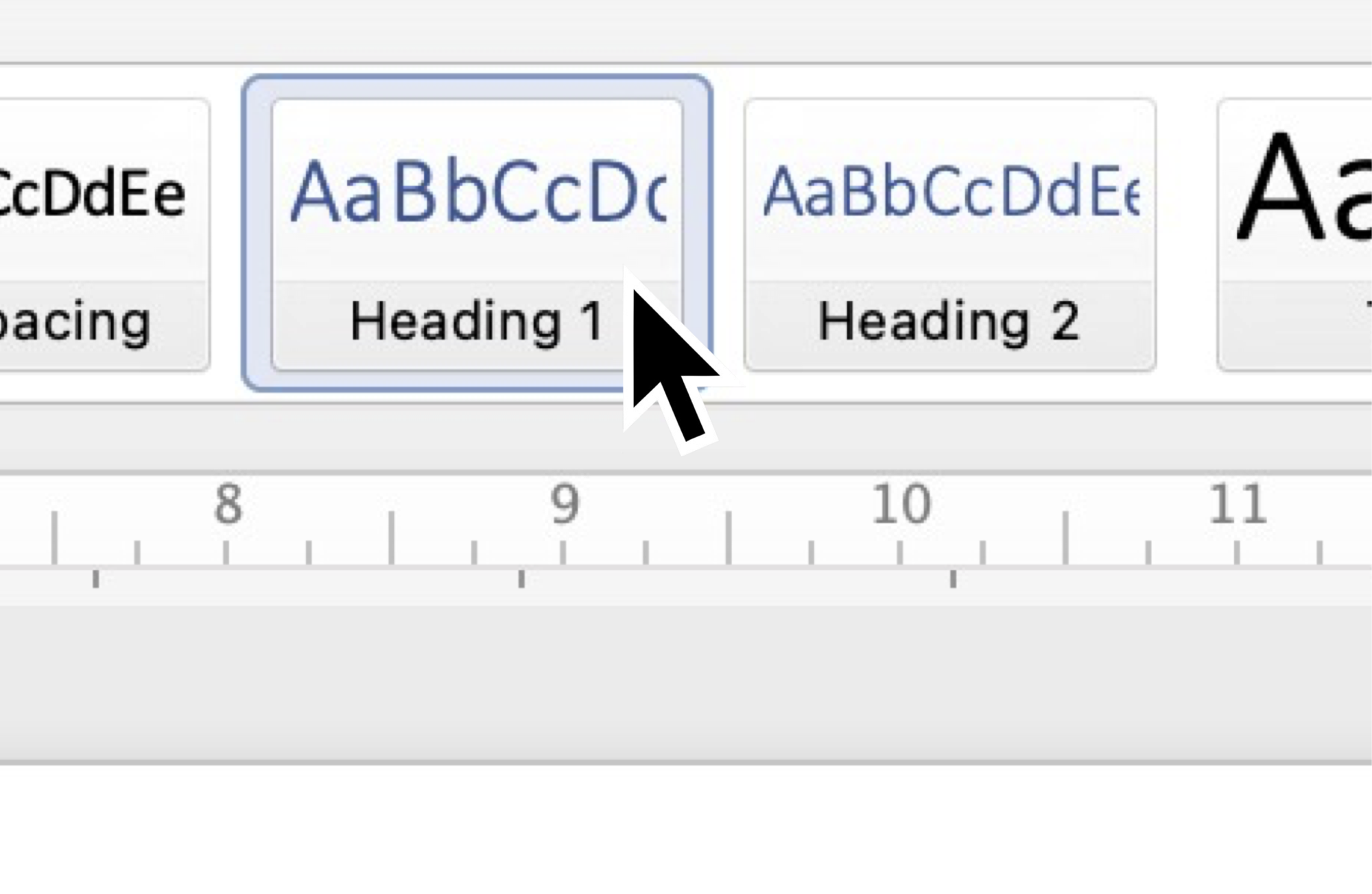

Are you creating or working on a Word template, or trying to understand how an existing one works? Here’s some superpower information you definitely need to know.

Hipster · 11 September 2023

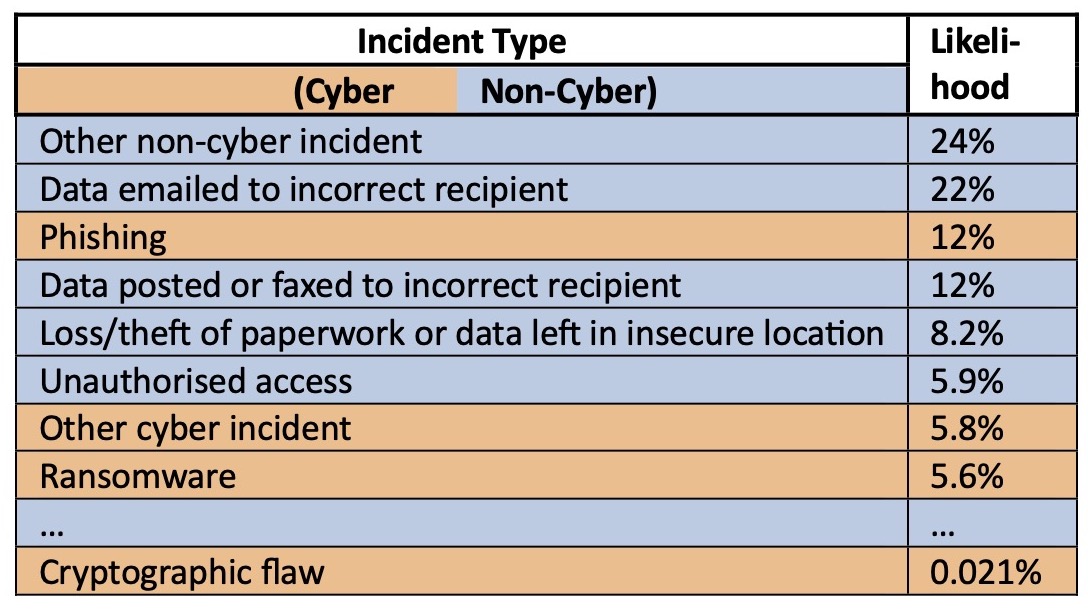

Security and privacy problems are not just about hackers. Overlooked everyday risks can multiply fast!

Hipster · 02 February 2023

How the concept of Absorptive Capacity can help us get value out of cybersecurity in product innovation.